Pinar Onal

Intention Recognition System for Adaptive Upper Limb Exoskeletons

My Role:

Research Engineer focused on integrating multimodal sensing (ultrasound, EMG, IMU) and developing machine learning models for intelligent exoskeleton control. Designed and ran human-subject experiments, built intention recognition and motion classification models, and demonstrated their applicability to adaptive control in wearable robotics.

Project Type:

Collaborative Research Project (AI4EXO) funded by European research initiatives (FOD, BOSA) in collaboration with KU Leuven

Impact

Upper-limb exoskeletons can reduce worker fatigue and improve productivity, yet most cannot interpret user intent or deliver timely assistance. This project introduces a multimodal sensing framework that combines ultrasound (Tissue Doppler Imaging), EMG, and motion sensors to recognize motion intentions from muscle tissue velocity and activity patterns. The project combines ultrasound signal processing with machine learning (KNN, LSTM) to identify motion types with up to 97.8% accuracy, enabling intention recognition for wearable robots.

Skills Used

Sensors: Ultrasound, high-density EMG, IMU integration

Signal processing: Filtering, Doppler velocity trace extraction, edge detection, feature extraction

Machine learning modeling: KNN, LSTM, and CNN-LSTM models for motion classification

Human subject experiment: Experimental design, data acquisition, metabolic cost testing

Validation tools: OpenSim musculoskeletal modeling, Biodex assessments, metabolic cost measurements.

Idea: Ultrasound as sensing modality

While EMG and IMUs measure surface activity and motion, ultrasound reveals internal muscle dynamics, offering a direct window into how muscles contract and change shape. There are two implementation paths:

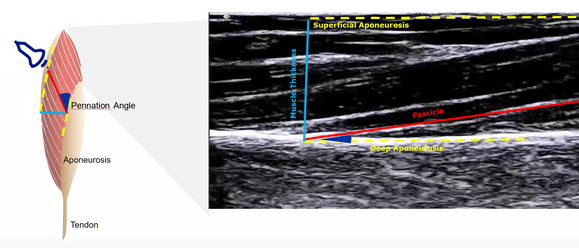

B-mode imaging, which tracks muscle structure and fascicle motion

PW Doppler imaging, which measures real-time contraction velocity

Using ultrasound in this way introduced a novel sensing layer for more intuitive, human-centered control systems.

Experiment Design

Participants: 10 (6 male, 4 female)

Tasks: Lifting, carrying, and overhead work to simulate repetitive tasks in industrial workplaces

Sensors: 32-channel EMG (TMSI), IMU (Xsens), Ultrasound (Telemed)

Setup: Adjustable shelves, overhead bar, 6.2 kg load, synchronized motion video

Included metabolic cost and biomechanical evaluation to assess user effort

Technical Approach:

Multimodal Data Acquisition: Designed synchronized acquisition of HD-EMG (32 channels), ultrasound recordings, and kinematic data during lifting, palletizing, and overhead work tasks.

Signal Validation: Evaluated electrode reliability during motion via heat-map-based activity analysis in MATLAB; optimized probe placement on the biceps for maximum fascicle visibility and signal-to-noise ratio.

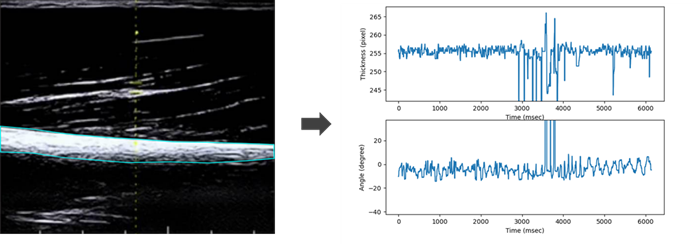

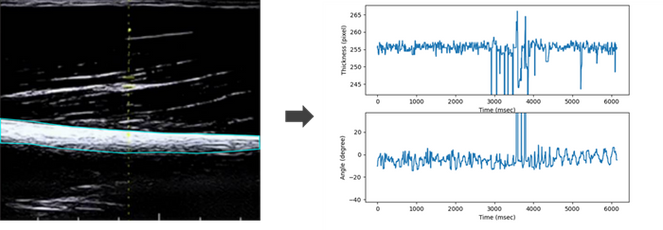

Ultrasound Modalities: Compared B-mode imaging and PW Doppler Imaging for dynamic muscle motion capture. Developed image-processing pipelines in Python using Canny edge detection and wavelet-based feature extraction to convert spectral Doppler velocity traces into time-series signals.
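As a rough illustration of how a spectral Doppler image can be turned into a velocity time series, the sketch below traces the upper edge of the spectrum column by column. It is not the project's pipeline: it swaps Canny edge detection for a simple vertical-gradient threshold so that it needs only NumPy, and the function name `doppler_envelope`, the `min_gradient` threshold, and the image layout (row 0 at `+v_max`, last row at the zero-velocity baseline) are all assumptions made for the example.

```python
import numpy as np

def doppler_envelope(spectrogram, v_max, min_gradient=0.1):
    """Trace the upper velocity envelope of a spectral Doppler image.

    spectrogram: 2D array, rows = velocity bins, columns = time samples.
                 ASSUMED layout: row 0 is +v_max, last row is zero velocity.
    Returns one velocity value per column.
    """
    img = np.asarray(spectrogram, dtype=float)
    peak = img.max()
    if peak > 0:
        img = img / peak  # normalize intensities to [0, 1]

    n_rows, n_cols = img.shape
    # Pad a dark row on top so grad[r] = img[r] - img[r - 1] for every row r.
    padded = np.vstack([np.zeros((1, n_cols)), img])
    grad = np.diff(padded, axis=0)

    envelope = np.zeros(n_cols)
    for col in range(n_cols):
        # Rows where the image turns from dark to bright going downward.
        edges = np.flatnonzero(grad[:, col] >= min_gradient)
        if edges.size == 0:
            continue  # no detectable spectrum in this column: keep velocity 0
        edge_row = edges[0]  # topmost edge = highest velocity present
        envelope[col] = v_max * (n_rows - 1 - edge_row) / (n_rows - 1)
    return envelope
```

In practice the image would first be cropped to the spectral display and denoised; a true Canny detector adds smoothing and hysteresis thresholding, which makes the trace more robust to speckle than this single-threshold version.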

Machine Learning Modeling

Developed and compared models for motion classification and intent forecasting:

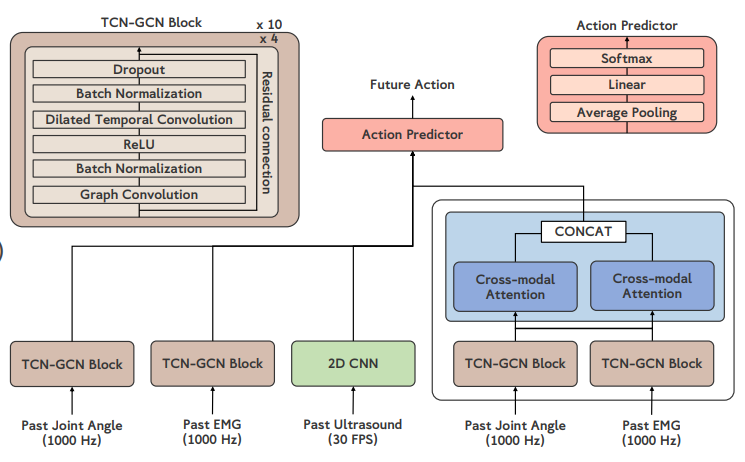

Architectures: TCN + GCN, CNN, LSTM, CNN-LSTM, and Cross-Attention Fusion

Features: EMG envelopes, Doppler velocity traces, and IMU joint angles

Goal: Predict user motion 100 ms in advance for real-time exoskeleton adaptation
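The 100 ms look-ahead can be framed as a supervised learning problem by pairing each window of past sensor data with the motion label observed 100 ms after the window ends. The sketch below shows one way to build such pairs; the sampling rate, the 0.5 s window length, and the helper name `make_forecast_pairs` are illustrative assumptions, not values from the project.

```python
import numpy as np

def make_forecast_pairs(features, labels, fs_hz, window_s=0.5, horizon_s=0.1, step=1):
    """Pair each past window with the label `horizon_s` seconds after it ends.

    features: (n_samples, n_features) fused sensor features
    labels:   (n_samples,) motion label per sample
    Returns X with shape (n_pairs, window_len, n_features) and y with shape (n_pairs,).
    """
    win = int(round(window_s * fs_hz))       # samples per input window
    horizon = int(round(horizon_s * fs_hz))  # samples to look ahead
    X, y = [], []
    # A window covers samples [end - win, end); its last sample is end - 1.
    for end in range(win, len(features) - horizon + 1, step):
        X.append(features[end - win:end])
        y.append(labels[end - 1 + horizon])
    return np.stack(X), np.array(y)

# Example with an assumed 100 Hz feature rate: the target is 10 samples ahead.
# X, y = make_forecast_pairs(features, labels, fs_hz=100)
```

The recording must be longer than one window plus the horizon, otherwise no pairs are produced and `np.stack` raises an error.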

Intent Forecasting Architecture

Motion Classification using PW Doppler Ultrasound

Initial tests using B-mode ultrasound images showed limited accuracy for motion recognition. During experimentation, I discovered that Pulsed-Wave Doppler signals produced distinct patterns for each task. Leveraging this, I trained KNN and LSTM models using Doppler features, showing that ultrasound velocity data can effectively classify upper-limb motions and enhance exoskeleton control precision.

Feature Selection: Applied the Discrete Wavelet Transform to obtain 16 time-frequency features, and segmented the signals into windows for temporal modeling.
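To make the wavelet step concrete, here is a minimal NumPy-only sketch of windowing a velocity trace and computing wavelet features per window. The source does not say which wavelet, how many decomposition levels, or which 16 features were used; the Haar wavelet, three levels, and four statistics per sub-band (which happens to give 4 x 4 = 16 values) are assumptions chosen purely for illustration, as are the function names.

```python
import numpy as np

def haar_dwt(signal, levels=3):
    """Multi-level Haar DWT. Returns [approx, detail_L, ..., detail_1]."""
    approx = np.asarray(signal, dtype=float)
    details = []
    for _ in range(levels):
        if approx.size % 2:          # drop the last sample so pairs line up
            approx = approx[:-1]
        even, odd = approx[0::2], approx[1::2]
        details.append((even - odd) / np.sqrt(2))  # high-pass branch
        approx = (even + odd) / np.sqrt(2)         # low-pass branch
    return [approx] + details[::-1]

def wavelet_features(window, levels=3):
    """Mean, std, energy, and peak magnitude of each sub-band (ASSUMED feature set)."""
    feats = []
    for band in haar_dwt(window, levels):
        feats += [band.mean(), band.std(), np.sum(band ** 2), np.abs(band).max()]
    return np.array(feats)

def segment(signal, win, step):
    """Split a 1D signal into overlapping windows of length `win`."""
    signal = np.asarray(signal)
    starts = range(0, len(signal) - win + 1, step)
    return np.stack([signal[s:s + win] for s in starts])

# Example: 64-sample windows with 50% overlap, one 16-value feature vector each.
# features = np.array([wavelet_features(w) for w in segment(trace, 64, 32)])
```

A library such as PyWavelets would normally replace the hand-written transform; the Haar version is used here only to keep the example dependency-free.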

Machine Learning Models:

KNN (inverse Hamming & Euclidean metrics) – non-parametric classifier for small dataset robustness.

LSTM networks – temporal deep learning model for motion sequence recognition.

Implemented intra-subject (personalized) and cross-subject (generalized) classification.
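The difference between the two evaluation schemes comes down to how the data is split: intra-subject evaluation trains and tests on recordings from the same person, while cross-subject evaluation holds out every sample from one person. The sketch below pairs a small k-nearest-neighbour classifier with a leave-one-subject-out splitter. It is a simplified stand-in, not the project's implementation: it uses a plain majority vote (the weighting scheme used in the project is not specified here), and the Hamming metric is implemented as the fraction of differing coordinates, which is only meaningful for discretized features. All function names are hypothetical.

```python
import numpy as np

def pairwise_distance(A, B, metric="euclidean"):
    """Distance between every row of A and every row of B."""
    if metric == "euclidean":
        diff = A[:, None, :] - B[None, :, :]
        return np.sqrt((diff ** 2).sum(axis=2))
    if metric == "hamming":
        # Fraction of coordinates that differ (assumes discretized features).
        return (A[:, None, :] != B[None, :, :]).mean(axis=2)
    raise ValueError(f"unknown metric: {metric}")

def knn_predict(X_train, y_train, X_test, k=3, metric="euclidean"):
    """Majority vote over the k nearest training samples."""
    dist = pairwise_distance(X_test, X_train, metric)
    nearest = np.argsort(dist, axis=1, kind="stable")[:, :k]
    preds = []
    for neighbour_labels in y_train[nearest]:
        values, counts = np.unique(neighbour_labels, return_counts=True)
        preds.append(values[np.argmax(counts)])  # ties go to the smallest label
    return np.array(preds)

def leave_one_subject_out(subject_ids):
    """Yield (held_out_subject, train_mask, test_mask) for cross-subject evaluation."""
    subject_ids = np.asarray(subject_ids)
    for s in np.unique(subject_ids):
        test = subject_ids == s
        yield s, ~test, test
```

For the intra-subject case, the same `knn_predict` call is applied after restricting `X` and `y` to one subject and splitting that subject's windows into training and test sets.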

Performance Metrics: Evaluated accuracy and sensitivity across motions and subjects
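For reference, accuracy is the fraction of all windows classified correctly, while sensitivity (recall) is computed per motion class as the fraction of that class's windows that were recognized. A minimal sketch of both, computed from a confusion matrix, follows; the function names and the integer class encoding are assumptions for the example.

```python
import numpy as np

def confusion_matrix(y_true, y_pred, n_classes):
    """Rows = true class, columns = predicted class."""
    cm = np.zeros((n_classes, n_classes), dtype=int)
    np.add.at(cm, (y_true, y_pred), 1)
    return cm

def accuracy_and_sensitivity(y_true, y_pred, n_classes):
    """Overall accuracy and per-class sensitivity (NaN for classes with no samples)."""
    cm = confusion_matrix(np.asarray(y_true), np.asarray(y_pred), n_classes)
    accuracy = np.trace(cm) / cm.sum()
    support = cm.sum(axis=1)  # number of true samples per class
    sensitivity = np.divide(
        np.diag(cm), support,
        out=np.full(n_classes, np.nan), where=support > 0,
    )
    return accuracy, sensitivity
```

Reporting sensitivity per motion alongside accuracy shows whether a single task (for example overhead work) is being misclassified even when overall accuracy looks high.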

Results

| Evaluation Type | Model | Peak Accuracy |
| --- | --- | --- |
| Intra-Subject | KNN (Hamming) | 97.8% |
| Cross-Subject | LSTM (100 hidden units) | 87.3% |

Conference Presentations

P. Onal, K. Langlois, J. Geeroms and T. Verstraten, “Multimodal Sensing for User Intention Prediction in Upper Limb Exoskeletons” (Abstract and Poster Presentation), WearRAcon Europe Conference 2023, Dusseldorf, Germany, 2023

P. Yang, B. Filtjens, P. Onal, K. Langlois, J. Geeroms, T. Verstraten and B. Vanrumste, “A Comparative Study of Multi-Modal Data for Human Action Forecasting in Robotic Assistive Devices” (Abstract and Poster Presentation), 21st National Day of Biomedical Engineering, Brussels, Belgium, 2023

Submitted Manuscripts:

Validation Studies

To get the best data quality from ultrasound, I reviewed current best practices for muscle ultrasound and ran a validation study against the OpenSim arm26 musculoskeletal model during elbow flexion.

To analyze the muscle dynamics observed in the ultrasound scans, key parameters such as pennation angle, deep aponeurosis location, and fascicle length were calculated and compared with the OpenSim simulation to select the best ultrasound settings and probe placement.
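As an illustration of the geometry involved, pennation angle can be taken as the acute angle between a fascicle and the deep aponeurosis, each marked as a straight line on the B-mode image. The sketch below also includes a common trigonometric estimate of fascicle length from muscle thickness; it assumes straight fascicles and parallel aponeuroses, and it is not necessarily the method used in this study. The function names and point format are hypothetical.

```python
import numpy as np

def line_angle_deg(p1, p2, q1, q2):
    """Acute angle in degrees between line p1-p2 and line q1-q2.

    Points are (x, y) image coordinates, e.g. two points picked along a
    fascicle and two along the deep aponeurosis.
    """
    u = np.subtract(p2, p1).astype(float)
    v = np.subtract(q2, q1).astype(float)
    cos = abs(np.dot(u, v)) / (np.linalg.norm(u) * np.linalg.norm(v))
    return float(np.degrees(np.arccos(np.clip(cos, 0.0, 1.0))))

def fascicle_length_mm(thickness_mm, pennation_deg):
    """Estimate fascicle length as thickness / sin(pennation angle).

    ASSUMES a straight fascicle running between parallel aponeuroses.
    """
    return thickness_mm / np.sin(np.radians(pennation_deg))

# Example: fascicle from (0, 0) to (10, 10), aponeurosis along the x-axis.
# angle = line_angle_deg((0, 0), (10, 10), (0, 0), (10, 0))
```

Values computed this way per frame can then be plotted against the corresponding quantities from the musculoskeletal simulation across the elbow flexion range.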